Kubernetes, KubeVirt and OpenStack Multi-Cluster Data Protection and Intelligent Recovery

Backup, Disaster and Ransomware Recovery, Migration and Mobility For DevOps, ITOps and Service Providers

4.9 out of 5 stars from 47 reviews

Newsletter April 2024

In this newsletter, we are excited to present a variety of content, ranging from Kubernetes backup FAQs to VMware-to-OpenStack migration, along with the Mobile World Congress Report.

Incremental vs. Differential Backup: Balancing Speed and Storage

Understand the differences between incremental and differential backup to improve your data protection strategy.

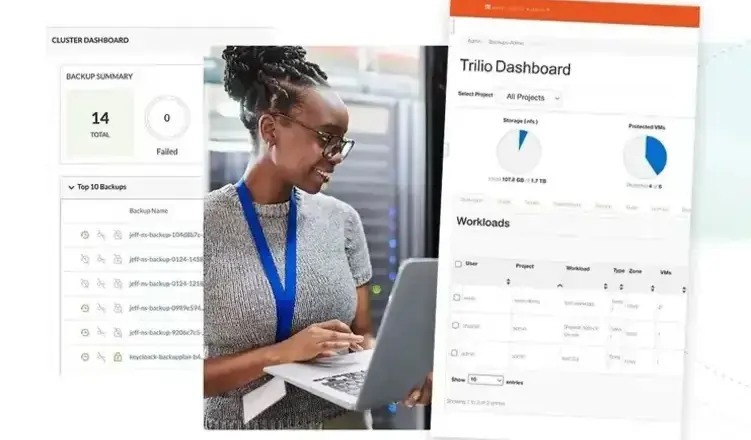

Cloud-Native by Design

Purpose-built for the cloud-native era, Trilio protects the most demanding environments to maximize stability across all tenants.

Intelligent Recovery for Kubernetes Apps

Intelligent, enterprise-grade cloud-native protection for Red Hat, Canonical, Mirantis, VMware, Nutanix, EKS, AKS, GKE, SUSE, and other CNCF-compliant Kubernetes distributions.

Intelligent Recovery for OpenStack

Restore entire workloads with a single click and get complete visibility into OpenStack operations in a single view, with the only backup and recovery solution built for TripleO, Red Hat OSP, Mirantis OpenStack, Canonical, Ansible, Kolla, and Rackspace OpenStack.

Red Hat® OpenShift®

A unified platform to build, modernize, and deploy applications at scale. Work smarter and faster with a complete set of services for bringing apps to market on your choice of infrastructure.

Why Choose Us?

| Features | Modern Way: Intelligent Recovery | Traditional Way: Data-Centric Recovery |
|---|---|---|
| Method | Self-service | Administered service |
| Responsiveness | Recovered in background | 2-day IT ticket |
| Orchestration | Automated / tech-driven | Reliant on a few experts |
| Your Data | Near-zero risk | High risk |
| Recovery Time | Predictable | Unpredictable |
| Compliance | Evidence-based | Questionable |

Don't let cloud outages cost you

While most enterprises are backing up their cloud applications, DevOps leaders and IT managers struggle to recover data quickly during outages or upgrades because of:

- manual recovery processes

- multiple unavailable backups

- breaking recovery scripts

This leads to losses and days of downtime, and puts the enterprise’s compliance at risk.

Goodbye, Traditional App Restores. Hello, Intelligent Recovery!

Minimize downtime. Maximize productivity and revenue.

Minimize downtime

Trilio provides the capabilities you need to stay proactive and minimize the impact of any outage or downtime.

Maximize productivity and revenue.

We're manually stitching files back together after outages.

Our recovery methods don't scale across the business.

Get near-zero RTO

With Trilio’s unique “Continuous Restore,” you can recover data to a point in time almost identical to its state immediately before the failure occurred, minimizing data loss and reducing the impact of downtime.

Ensure the continuity of your business operations.

Have evidence-based compliance

“Evidence-based compliance” is the process of collecting, analyzing, and documenting the information needed to demonstrate that an organization adheres to a set of regulations or standards.

Reduce the risk of penalties and reputational damage.

Protecting Your Enterprise with Trilio Is Easy.

Go from traditional application restores to intelligent recovery.

See how Trilio's intelligent recovery solution can help save your organization from unavailable backups and downtime.

Have one of our intelligent recovery experts get you a red-yellow-green assessment with specific recommendations.

Receive notifications on applications that have just been restored, instead of alerts on outages.

The World Has Changed

Traditional recovery approaches no longer work for the enterprise. Cloud-native or not, data loss is not an option, yet with traditional recovery methods it is a real risk. Trilio’s intelligent recovery approach gets your apps and data recovered in minutes, automatically and in the background, with near-zero RPO. Get the peace of mind that comes with knowing your apps and data are always recoverable and your business can keep running smoothly in the cloud.

Our Experience Is Your Advantage

Hear what our incredible customers have to say!

Stay Up-to-Date with Our Latest Resources

Access our comprehensive collection today.